As the need to extend the cloud computing paradigm to more resources emerged, especially over the last decade, the expression “fog computing” appeared, although it does not yet have a commonly agreed definition. According to NIST, fog computing is defined as “a horizontal, physical or virtual resource paradigm that resides between smart end-devices and traditional cloud or data centers. This paradigm supports vertically-isolated, latency-sensitive applications by providing ubiquitous, scalable, layered, federated, and distributed computing, storage, and network connectivity.” It is therefore a paradigm that includes cloud, data centers and less capable devices residing at the edge of the network. Put more simply, it can be seen as an extension of the cloud to the edge, that is, to end nodes and devices.

To achieve fog computing, PrEstoCloud therefore provides deployment capabilities that allow services needing computation, storage and network connectivity to be deployed in a distributed and scalable way.

Cloud Deployment

With PrEstoCloud, applications can be deployed to a variety of public cloud providers or to private clouds. This is made possible by the Autonomic Data-Intensive Application Manager (ADIAM), which uses workflows to provision new VMs and to deploy and configure the PrEstoCloud applications:

- VM Provisioning workflow

- VM Configuration workflow

- Application finalization and startup workflow

These workflows have been designed to be generic enough to support different types of cloud infrastructures and regions, as well as different types of applications and their relevant configurations.
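The ordering of the three workflows can be illustrated with a minimal Python sketch. It is not ADIAM code: the `DeploymentContext` class, the function names and the `deploy` helper are hypothetical names introduced here, and each step only records what a real workflow would do. The point is that the same generic chain can be parameterized by provider, region and application.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DeploymentContext:
    """Hypothetical state shared by the three workflows."""
    provider: str
    region: str
    application: str
    state: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


def provision_vm(ctx: DeploymentContext) -> None:
    # Stand-in for the VM Provisioning workflow: only records a made-up VM id.
    ctx.state["vm_id"] = f"{ctx.provider}-{ctx.region}-vm"
    ctx.log.append("provisioned")


def configure_vm(ctx: DeploymentContext) -> None:
    # Stand-in for the VM Configuration workflow: requires a provisioned VM.
    if "vm_id" not in ctx.state:
        raise RuntimeError("VM must be provisioned before configuration")
    ctx.state["configured"] = "yes"
    ctx.log.append("configured")


def finalize_and_start(ctx: DeploymentContext) -> None:
    # Stand-in for the application finalization and startup workflow.
    if ctx.state.get("configured") != "yes":
        raise RuntimeError("VM must be configured before startup")
    ctx.state["status"] = f"{ctx.application} running"
    ctx.log.append("started")


# The workflows run in a fixed order, independent of provider or application.
WORKFLOWS: List[Callable[[DeploymentContext], None]] = [
    provision_vm,
    configure_vm,
    finalize_and_start,
]


def deploy(provider: str, region: str, application: str) -> DeploymentContext:
    ctx = DeploymentContext(provider, region, application)
    for workflow in WORKFLOWS:
        workflow(ctx)
    return ctx
```

Calling `deploy("private-cloud", "region-1", "demo-app")` runs the three steps in order. Each step checks that its predecessor has completed, which is why the chain can be reused unchanged across infrastructures.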

Edge Deployment

With cloud deployment already in place, PrEstoCloud seamlessly extends deployment to edge devices in order to achieve fog computing. Edge devices in PrEstoCloud are in line with the definition of the OpenFog Consortium: we treat an edge device in general as a physical entity that is embedded in, attached to or close to other physical entities in its vicinity, with the capability to convey digital information from or to those physical entities. In addition, we use the term extreme edge to refer to the farthest connected points of the network, that is, the points closest to where operational data is generated.

In PrEstoCloud we need to combine cloud, data center and edge resources to achieve fog characteristics, and we therefore need to provide the capability to run applications independently of the underlying infrastructure. Execution is performed on PrEstoCloud nodes. A PrEstoCloud node is a single physical or virtual node with compute, storage, and network resources that interoperates with other nodes through common management, orchestration, and other fog computing service interfaces provided by PrEstoCloud.

Fog Deployment

To make this architecture concrete and to run applications on top of this fog deployment, we use OS-level virtualization (containerization), specifically the deployment of Docker images. A Docker container image is a lightweight, stand-alone, executable package of a service that includes all required code, system tools, system libraries, settings, etc. To create a container image, a file named Dockerfile needs to be prepared. A Dockerfile consists of consecutive steps that describe how to build a specific Docker image. In other words, a Dockerfile states which base image should be used, which dependencies need to be downloaded, and which commands have to run to execute the containerized application.
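To make the three ingredients of a Dockerfile tangible, the following Python sketch renders a small Dockerfile from a base image, a list of dependencies and a start command. The helper `render_dockerfile`, the `/app` directory, the use of `pip` and the example image name are assumptions chosen for illustration. Only the instructions `FROM`, `WORKDIR`, `COPY`, `RUN` and `CMD` are standard Dockerfile syntax.

```python
import json
from typing import List


def render_dockerfile(base_image: str,
                      dependencies: List[str],
                      command: List[str]) -> str:
    """Render a minimal Dockerfile as text (illustrative helper)."""
    lines = [
        f"FROM {base_image}",   # which base image should be used
        "WORKDIR /app",         # assumed application directory
        "COPY . /app",          # copy the service code into the image
    ]
    if dependencies:
        # Which dependencies need to be downloaded (assumes a Python service).
        lines.append("RUN pip install --no-cache-dir " + " ".join(dependencies))
    # Which command runs the containerized application (exec form is JSON).
    lines.append("CMD " + json.dumps(command))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_dockerfile("python:3.11-slim", ["requests"], ["python", "main.py"]))
```

Writing the returned text to a file named `Dockerfile` next to the service code would give `docker build` the steps it needs; the sketch itself does not call Docker.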

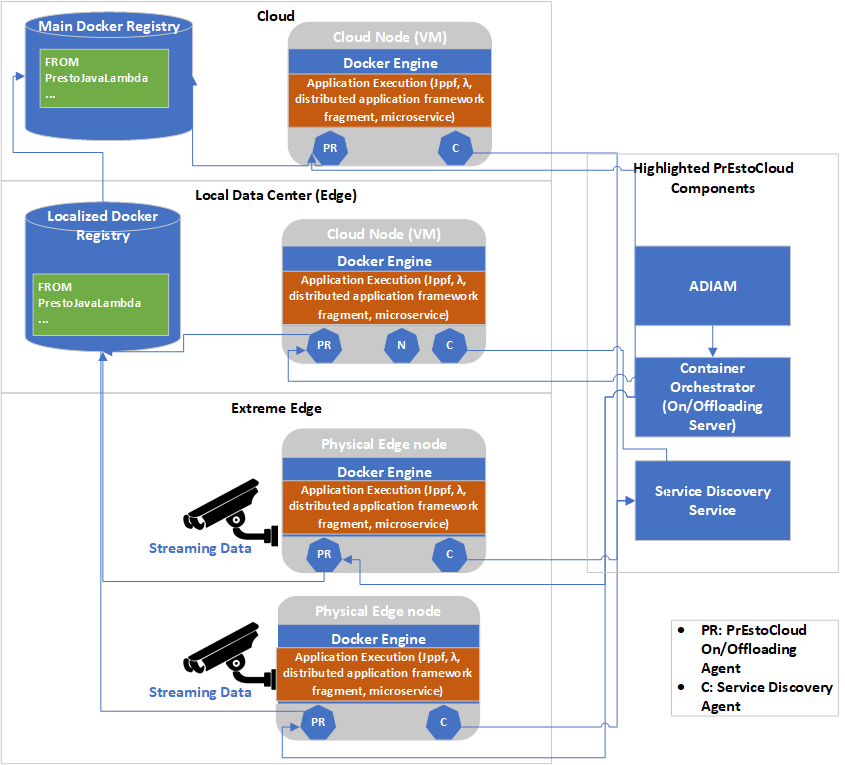

To achieve fog deployment, we first extended the cloud deployment and management characteristics of the Autonomic Data-Intensive Application Manager (ADIAM) with an Edge Deployment and Management component that is responsible for the management of edge resources. A container management solution was then developed, as described in PrEstoCloud D3.7 “Mobile Offloading Processing Microservice”, which describes the mechanism that allows parts of the processing to be shifted to resources at the extreme edge of the network. This mechanism consists of an on/offloading server that connects with ADIAM and instructs the deployment of containers on edge devices that have a specific client installed. By using this mechanism on both edge and cloud resources, application code can be distributed using Docker containers.

The Edge On/Offloading Server provides the edge node registration API, which the On/Offloading Client installed on every edge resource uses to register its edge node. Note that the Edge On/Offloading Server connects to ADIAM at a single point, through an API. The goal is to make this functionality pluggable, in the sense that more than one implementation of the Edge On/Offloading mechanism can be used, provided that the defined API is respected.

An overview of the deployment of containers in both cloud and edge resources is depicted in the figure below. All available edge resources that run the PrEstoCloud Agent contain the Service Discovery Agent and the On/Offloading Client. This means that an edge node, once booted, registers itself with the Service Discovery Service (which is part of the Cloud and Edge Repository), and the On/Offloading Server is then able to instruct the On/Offloading Client to fetch the appropriate Docker image from the closest Docker registry and run it.
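The register-then-instruct flow described above can be sketched in a few lines of Python. This is an in-memory illustration only, not the API defined in D3.7: the class and method names are hypothetical, no network or Docker calls are made, and using measured latency to decide which registry is "closest" is an assumption, since the text does not state how closeness is determined.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Registry:
    url: str
    latency_ms: float  # assumed closeness metric, not taken from the source


@dataclass
class OnOffloadingClient:
    """Hypothetical stand-in for the client installed on an edge node."""
    node_id: str
    registries: List[Registry]
    running: List[str] = field(default_factory=list)

    def fetch_and_run(self, image: str) -> str:
        # Pick the closest registry; a real client would pull and start the image.
        closest = min(self.registries, key=lambda r: r.latency_ms)
        reference = f"{closest.url}/{image}"
        self.running.append(reference)
        return reference


class OnOffloadingServer:
    """Hypothetical stand-in for the server side of the mechanism."""

    def __init__(self) -> None:
        self._clients: Dict[str, OnOffloadingClient] = {}

    def register(self, client: OnOffloadingClient) -> None:
        # Called once the edge node has booted.
        self._clients[client.node_id] = client

    def deploy(self, node_id: str, image: str) -> str:
        if node_id not in self._clients:
            raise KeyError(f"edge node {node_id!r} is not registered")
        return self._clients[node_id].fetch_and_run(image)


if __name__ == "__main__":
    server = OnOffloadingServer()
    client = OnOffloadingClient("edge-1", [
        Registry("registry.cloud.example", 80.0),
        Registry("registry.edge.example", 5.0),
    ])
    server.register(client)
    print(server.deploy("edge-1", "demo-app:1.0"))
```

Because the server only talks to clients through `register` and `fetch_and_run`, a different implementation of either side could be swapped in, which mirrors the pluggability goal stated for the Edge On/Offloading mechanism.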

This way, PrEstoCloud can support applications built using the microservice paradigm, applications following the Function as a Service (FaaS) paradigm through the use of anonymous (λ, lambda) functions, and applications built using distributed computing frameworks (Storm, Spark, JPPF). In general, it can support the execution of any application capable of exploiting the distributed deployment paradigm.

To properly realize the deployment of each of these frameworks, additional components might be needed as part of the application. The deployment is then described using the PrEstoCloud annotations and the TOSCA (Topology and Orchestration Specification for Cloud Applications) file, so that ADIAM can create the proper deployment workflows. Executing these workflows, and consequently deploying the appropriate container images, enables the cloud and edge deployment.

Network and Storage for Fog Computing

As already mentioned, the notion of a fog computing architecture includes not only computational resources, but also network and storage capabilities. To provide cloud-to-edge connectivity that applications can use, a common network space is needed, and this is usually realized as a private IP space. To achieve this private IP space, PrEstoCloud provides two different and complementary solutions. The first is the ability to create a Virtual Inter-site Network Overlay that can be used among different cloud providers and also on edge resources (using a VPN client such as OpenVPN). This solution is the PrEstoCloud VPN Gateway, and the complete documentation of its architecture, implementation and usage can be found in deliverable D3.3. The second solution, created to enhance connectivity especially on edge devices, is the mesh networking stack developed in the scope of task 3.5 and described in deliverable D3.9, which allows the creation of an IPv6 address space. These solutions can be used separately or combined to create a fog deployment.

Finally, although storage is available on cloud and data center nodes, PrEstoCloud also offers a sophisticated solution for temporal storage based on the principles of a Distributed Hash Table (DHT). A DHT is a data structure that is created and maintained by many network participants without any centralization. Since it does not need a leader, it can be a fully distributed structure. As described in deliverable D3.9, by using the PrEstoCloud Device Stack (PDS), application developers can use the DHT on top of fog resources to read and write data.
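The leaderless property of a DHT can be illustrated with a toy, single-process Python sketch. It is not the PDS API described in D3.9: the `ToyDHT` class, its methods and the choice of SHA-1 hashing on a ring are assumptions made for illustration. Every participant can compute the owner of a key from the key's hash alone, so no central coordinator is needed to locate data.

```python
import hashlib
from bisect import bisect_left
from typing import Dict, List, Optional


def _hash(key: str) -> int:
    """Map a string to a position on the hash ring."""
    return int.from_bytes(hashlib.sha1(key.encode("utf-8")).digest(), "big")


class ToyDHT:
    """In-memory illustration: each node stores only the keys it owns."""

    def __init__(self, node_names: List[str]) -> None:
        if not node_names:
            raise ValueError("at least one node is required")
        # Nodes are placed on the ring at the hash of their name.
        self._ring = sorted((_hash(name), name) for name in node_names)
        self._positions = [position for position, _ in self._ring]
        self._stores: Dict[str, Dict[str, str]] = {n: {} for n in node_names}

    def owner(self, key: str) -> str:
        # The first node at or after the key's position owns it (wrapping around).
        index = bisect_left(self._positions, _hash(key)) % len(self._ring)
        return self._ring[index][1]

    def put(self, key: str, value: str) -> None:
        self._stores[self.owner(key)][key] = value

    def get(self, key: str) -> Optional[str]:
        return self._stores[self.owner(key)].get(key)

    def keys_on(self, node_name: str) -> List[str]:
        return sorted(self._stores[node_name])
```

A write such as `dht.put("sensor-42", "21.5")` lands on exactly one node, and `dht.get("sensor-42")` finds it again by recomputing the same hash. A real DHT would add replication and handle nodes joining or leaving, which this sketch omits.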